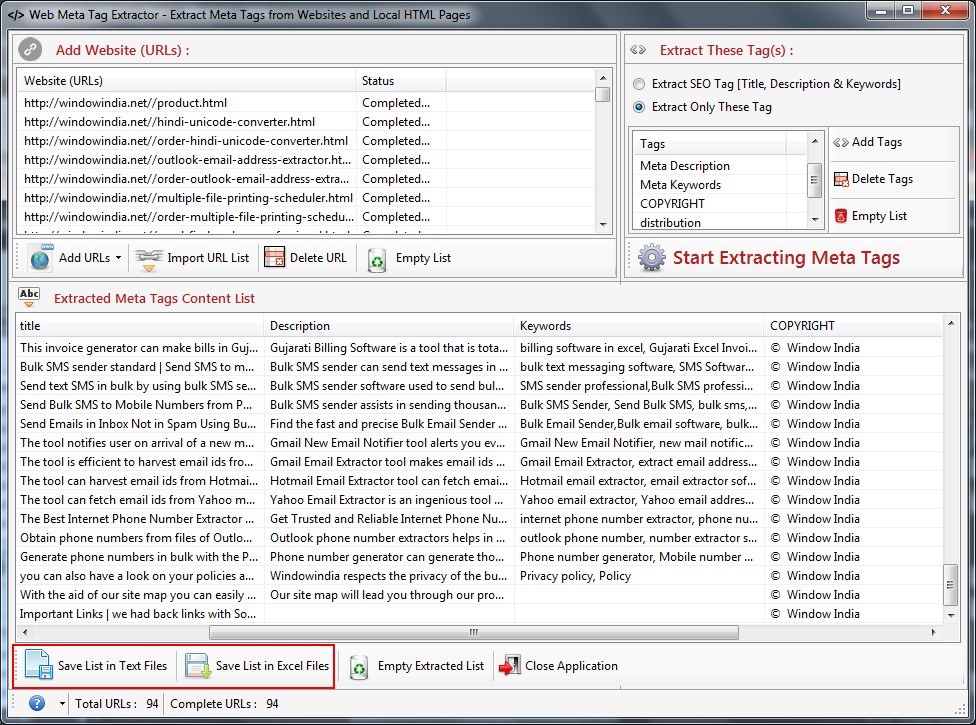

When building a new version of a website, we often need to extract the current pages, the page addresses (URLs), and the meta title and description. These can then be used to ‘301 redirect’ old pages to their new versions, which helps keep search engine rankings and avoids ‘page not found’ errors. This can be a time-consuming task to do by hand! Luckily, there are two free tools that make it quick and easy.

Go to the first tool, paste your website address into the bar and click start. Once it has scanned all your pages, scroll down a bit and download the zip file containing all your sitemaps. Unzip these files to a folder and you’ll see your sitemap in a variety of formats. Open the urllist.txt file (it should automatically open in Notepad on Windows and TextEdit on Mac) and copy all the URLs.

Go to the second tool and paste the list of URLs into the box on the left. Let it scan all your pages, then click ‘download CSV’ under the list: you now have a list of all the website’s pages, with their URLs, meta titles and meta descriptions. This can be opened in Numbers/Excel/Google Sheets so you can work out the redirects, and you can also copy the metadata out to paste into the SEO data plugin of the new site.

R is a free software environment for statistical computing and graphics. Together with Python, it is one of the most popular languages among (data) scientists. Although both environments are similar, most people feel they face a choice between the two, and the question “R vs Python, what should I learn?” resonates across the Internet. But why choose between them when you can choose both?

The reticulate package provides a comprehensive set of tools for interoperability between Python and R. It allows Python code to be executed inside an R session, so that Python packages can be used with minimal adaptations. This is ideal for those who would rather operate from R than go back and forth between languages and environments. The package provides several ways to integrate Python code into R projects: Python in R Markdown, importing Python modules, sourcing Python scripts, and an interactive Python console within R. Here is the complete vignette with thorough documentation on Calling Python from R.

In the tutorial below, we are going to import a Python scraper straight from R and use the results directly with the usual R syntax, thus harnessing its functions for data mining: content discovery and main text extraction.

Trafilatura is a Python package and command-line tool which downloads, parses, and scrapes web page data: it can extract metadata, main body text and comments while preserving parts of the text formatting and page structure. Its features include parallelized online and offline processing, extraction of main text, comments and metadata in several output formats, and link discovery starting from the homepage of a website. The extractor aims to be precise enough not to miss texts or discard valid documents; it must also be robust, yet reasonably fast. The library outperforms similar software in a text extraction benchmark and in an external evaluation, ScrapingHub’s article extraction benchmark. Using it from R allows you to tap into its potential while operating from your environment of choice.
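Both workflows above come down to collecting, for each page, its URL, `<title>` and meta description. The following is only a minimal sketch using the Python standard library's `html.parser`. It is not Trafilatura's API, nor either of the free tools mentioned above. It pulls those two fields out of pages you have already downloaded and writes them as CSV ready for a spreadsheet. The helper names (`read_url_list`, `page_meta`, `meta_csv`) are hypothetical. The urllist.txt format is assumed to be one URL per line. Downloading the pages (for instance with `urllib.request`) is left out.

```python
import csv
import io
from html.parser import HTMLParser


class PageMetaParser(HTMLParser):
    """Collects the <title> text and the <meta name="description"> content."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; values may be None.
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # Title text may arrive in several chunks, so accumulate it.
        if self._in_title:
            self.title += data


def read_url_list(text):
    """Hypothetical helper: split urllist.txt-style text (assumed one URL per line)."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def page_meta(html):
    """Return (meta title, meta description) for one HTML document."""
    parser = PageMetaParser()
    parser.feed(html)
    parser.close()
    return parser.title.strip(), parser.description


def meta_csv(pages):
    """Build CSV text with one row per page: url, title, description.

    `pages` maps each URL (e.g. from read_url_list) to its downloaded HTML.
    """
    out = io.StringIO()
    writer = csv.writer(out)
    writer.writerow(["url", "title", "description"])
    for url, html in pages.items():
        title, description = page_meta(html)
        writer.writerow([url, title, description])
    return out.getvalue()
```

For example, `meta_csv({"https://example.com/about": html})` returns a header row followed by one `url,title,description` row per page. Saved as a `.csv` file, it can be opened in Numbers, Excel or Google Sheets to plan the 301 redirects.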

Trafilatura Why choose between R and Python?

Date: Tue | Category: Tutorial | Tags: web scraping
